Hey there! Welcome to BestBlogs.dev Issue #84.

Happy Chinese New Year! We took a two-week break for the holiday, so this issue is packed with extra content—take your time with it.

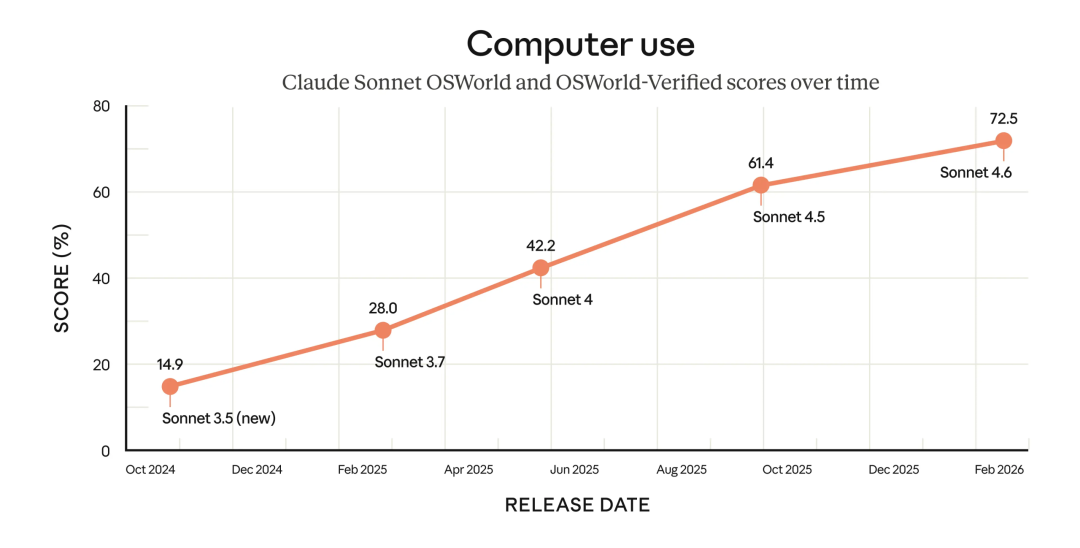

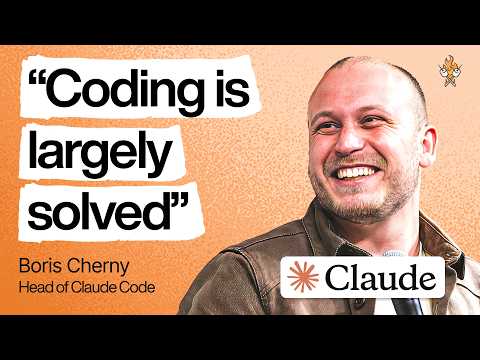

The most significant shift over the past two weeks isn't any single model topping a new benchmark. It's the accelerating transformation of the engineer's role, from writing code to orchestrating AI agents that write code. Boris Cherny, creator of Claude Code, says the programming problem has largely been solved. Engineers at OpenAI are already managing 10 to 20 agents simultaneously on hour-long tasks. Anthropic's trend report calls it a systematic shift from humans writing code to humans orchestrating agents. Meanwhile, Claude Sonnet 4.6, Gemini 3.1 Pro, GLM-5, and MiniMax M2.5 all dropped within weeks of each other. The stronger the models get, the more valuable orchestration and judgment become.

On my end, I've been deep in building BestBlogs 2.0's core features—orchestrating multiple AI coding tools and agents through Spec documents for requirement discussions, architecture design, demo development, and interaction reviews. Almost no hand-written code involved. Aiming for a late March launch, and I'll share more details then.

Here are 10 highlights worth your attention this week:

🏆 The model arms race is heating up fast. Claude Sonnet 4.6 brings a million-token context window and upgraded agentic capabilities, outperforming the previous flagship Opus 4.5 in 59% of real-world tests at the same price as Sonnet 4.5. Gemini 3.1 Pro jumped from 31% to 77% on the ARC-AGI-2 reasoning benchmark, introduced three-level thinking modes for flexible compute allocation, and costs less than half as much as Claude Opus 4.6. More capability at the same price is the new normal.

🤖 GLM-5 and MiniMax M2.5 tackle the same question from different angles: how to make agents actually work in production. GLM-5 is designed around agent engineering from the ground up, achieving state-of-the-art open-source performance through asynchronous RL and sparse attention. MiniMax M2.5 pushes continuous agent operation costs below $1 per hour, making unconstrained complex agent deployment a practical reality.

🎨 Seedance 2.0 and Nano Banana 2 push boundaries in video and image generation respectively. Seedance 2.0 goes beyond generating visuals—it understands directorial thinking, autonomously handling storyboard design and emotional pacing. Nano Banana 2 slashes API pricing significantly, and while hands-on testing shows results aren't quite as impressive as the marketing suggests, it genuinely makes high-quality image generation accessible to everyone.

🛠️ Two interviews with Boris Cherny, creator of Claude Code, are the must-reads of this issue. He traces Claude Code's journey from a two-upvote internal project to powering 4% of GitHub commits. The core philosophy: build for the model six months from now, not today's model. He hasn't written a single line of code since Opus 4.5, and believes the next frontier is AI evolving from executor to a colleague that proactively suggests ideas.

⚡ OpenAI's engineering lead Sherwin Wu reveals how AI tools are reshaping engineering teams: 95% of engineers use Codex daily, PR output gaps between high and low performers reach 70%, and engineers who can manage 10 to 20 agents simultaneously are pulling far ahead. He also candidly notes that many enterprise AI deployments have negative ROI, and that the second- and third-order effects of one-person billion-dollar companies are severely underestimated.

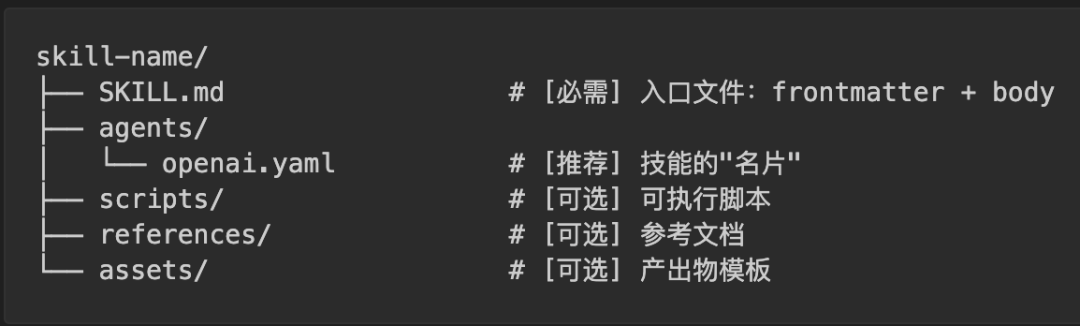

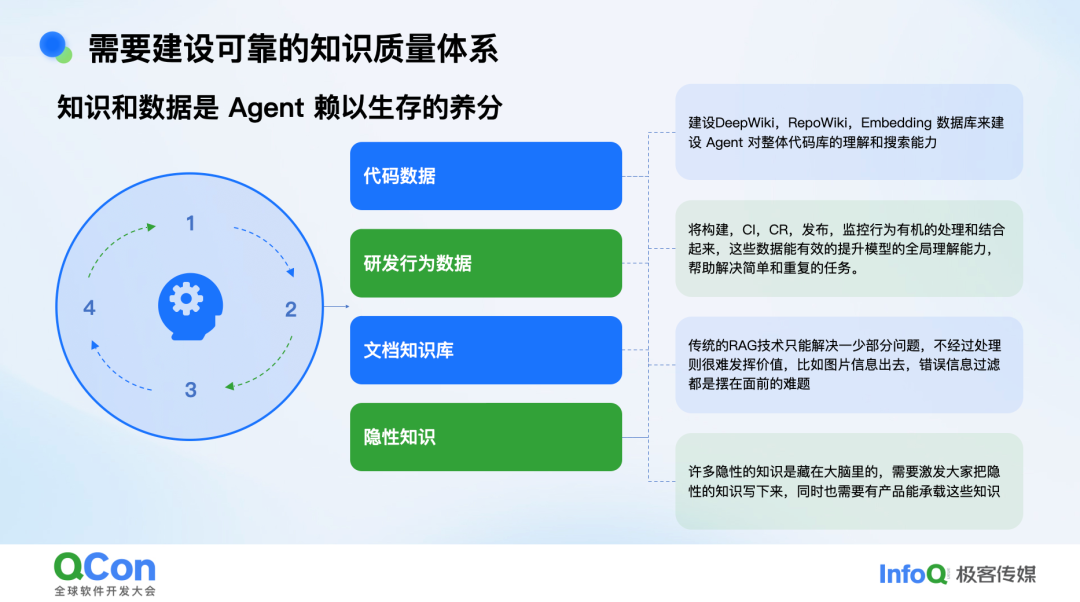

📁 The next frontier in LLM engineering is shifting from parameter tuning to memory. An InfoQ talk systematically covers memory layering, proactive scheduling, and mind-map-style information organization. The key insight: instead of reactively handling retrieval at query time, front-load memory management during interaction gaps so relevant memories are ready before the query arrives. Datawhale's breakdown of Skill design reveals a critical dividing line: lock down fragile operations with scripts, guide creative tasks with natural language.

💡 Vibe Coding is moving from concept to large-scale production. Alibaba's internal practice exposes real challenges—code quality consistency, debugging efficiency, and security vulnerabilities—while offering battle-tested solutions like templatizing successful paths and abstracting agents as reusable tools. Meanwhile, a product manager with no coding background built a personal AI agent on their own server in one afternoon using Claude Code, proving that product sense is scarcer than coding ability.

🧩 Anthropic's agentic coding trend report maps out a systematic transformation across eight dimensions: multi-agent collaboration, long-running autonomous tasks, and programming democratization among them. The core thesis: AI amplifies the judgment engineers already have rather than replacing it. System design, task decomposition, and quality assurance—the old fundamentals—are worth more than ever in the agent era.

🔬 Google's Chief AI Scientist Jeff Dean walks through the full arc from loading Google's entire index into memory in 2001 to TPU co-design, offering two key predictions: personalized models that attend to all of a user's data, and specialized hardware enabling ultra-low latency that will fundamentally reshape human-AI collaboration.

👨‍💼 The debate over whether AI will end software engineering continues. UML co-creator Grady Booch pushes back on Dario Amodei's claims, pointing out that software engineering has survived multiple existential crises, each time emerging into a new golden age. Naval offers a different angle: agency is humanity's real moat against AI replacement, because AI has no desires, no survival pressure, and can't make autonomous decisions in truly unknown territory. The only way to overcome AI anxiety is to open the hood, understand it, and then act.

Hope this issue sparks some new ideas. Stay curious, and see you next week!