Hey there! Welcome to BestBlogs.dev Issue #85.

One keyword threads through this week's articles: harnessing. Essays published on martinfowler.com argue that developers' core work is shifting from writing code to building the harness agents depend on—specs, quality gates, and workflow guides. A Chinese podcast title puts it more bluntly: stop working, start setting up the office for your AI. OpenAI's team shipped a million lines of Codex-generated code over five months, not by using a stronger model, but by enforcing structured knowledge bases and rigid architectural constraints. As agents grow more capable, the real competitive edge isn't whether you use AI, but whether you can harness it.

On the BestBlogs.dev front, we've been going deep on AI coding to build out version 2.0. The focus is custom subscription sources and personalized feeds, so everyone can shape their reading experience around their own interests. I'm also developing Skills on top of open APIs for content search, deep reading, and daily operations—all aimed at truly harnessing the future of reading.

Here are 10 highlights worth your attention this week:

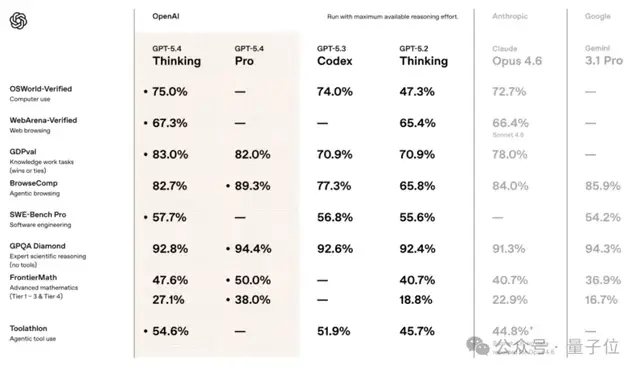

🤖 GPT-5.4 lands as OpenAI's first model to unify reasoning, coding, native computer use, deep search, and million-token context in a single package. The standout is native computer use: the model reads screenshots, moves the mouse, and types on the keyboard, surpassing average human performance on OSWorld desktop tasks. A tool-search mechanism cuts agent token consumption by 47%, pairing high capability with lower cost. Meanwhile, GPT-5.3 Instant optimizes for conversational feel over benchmark scores and reduces hallucination rates on web-search answers by 26.8%, a meaningful step toward making ChatGPT a reliable daily tool.
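
A rough sketch of why tool search can save tokens, assuming a generic deferred-loading design rather than OpenAI's actual implementation. Instead of placing every tool's full schema in the prompt up front, the agent searches a catalog and loads only the definitions that match the task. `ToolDef`, `search_tools`, the keyword-overlap ranking, and the 4-characters-per-token estimate are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class ToolDef:
    name: str
    description: str
    schema: str  # full parameter schema as text; usually the bulkiest part

def estimate_tokens(text: str) -> int:
    # Crude assumption: roughly 4 characters per token.
    return max(1, len(text) // 4)

def definition_tokens(tool: ToolDef) -> int:
    return estimate_tokens(tool.name + tool.description + tool.schema)

def eager_cost(tools: List[ToolDef]) -> int:
    """Eager loading: every full definition goes into the prompt up front."""
    return sum(definition_tokens(t) for t in tools)

def search_tools(tools: List[ToolDef], query: str, limit: int = 3) -> List[ToolDef]:
    """Hypothetical keyword search: rank tools by word overlap with the query."""
    words = set(query.lower().split())
    scored = []
    for t in tools:
        vocab = set((t.name.replace("_", " ") + " " + t.description).lower().split())
        score = len(words & vocab)
        if score > 0:
            scored.append((score, t.name, t))
    scored.sort(key=lambda s: (-s[0], s[1]))  # best score first, ties by name
    return [t for _, _, t in scored[:limit]]

def deferred_cost(tools: List[ToolDef], query: str) -> Tuple[int, List[str]]:
    """Deferred loading: only definitions returned by the search enter the context."""
    loaded = search_tools(tools, query)
    return sum(definition_tokens(t) for t in loaded), [t.name for t in loaded]

catalog = [
    ToolDef("read_file", "Read a file from disk", "x" * 400),
    ToolDef("send_email", "Send an email message", "y" * 400),
    ToolDef("query_db", "Run a database query", "z" * 400),
]
cost, names = deferred_cost(catalog, "read the config file")
print(eager_cost(catalog), cost, names)  # → 321 107 ['read_file']
```

A real system would also pay for the search step itself and would likely rank with embeddings rather than keyword overlap; the 47% figure comes from OpenAI, not from this toy.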

🏗️ Two essays on martinfowler.com form a cohesive argument this week. The first positions developers "on the loop": the core job becomes building and maintaining the harness that agents run on, with an agentic flywheel where agents not only execute tasks but continuously improve the harness itself. The second introduces a Design-First collaboration framework, aligning on capabilities, components, interactions, interfaces, and implementation before any code is generated, preventing architectural decisions from being silently embedded by AI.

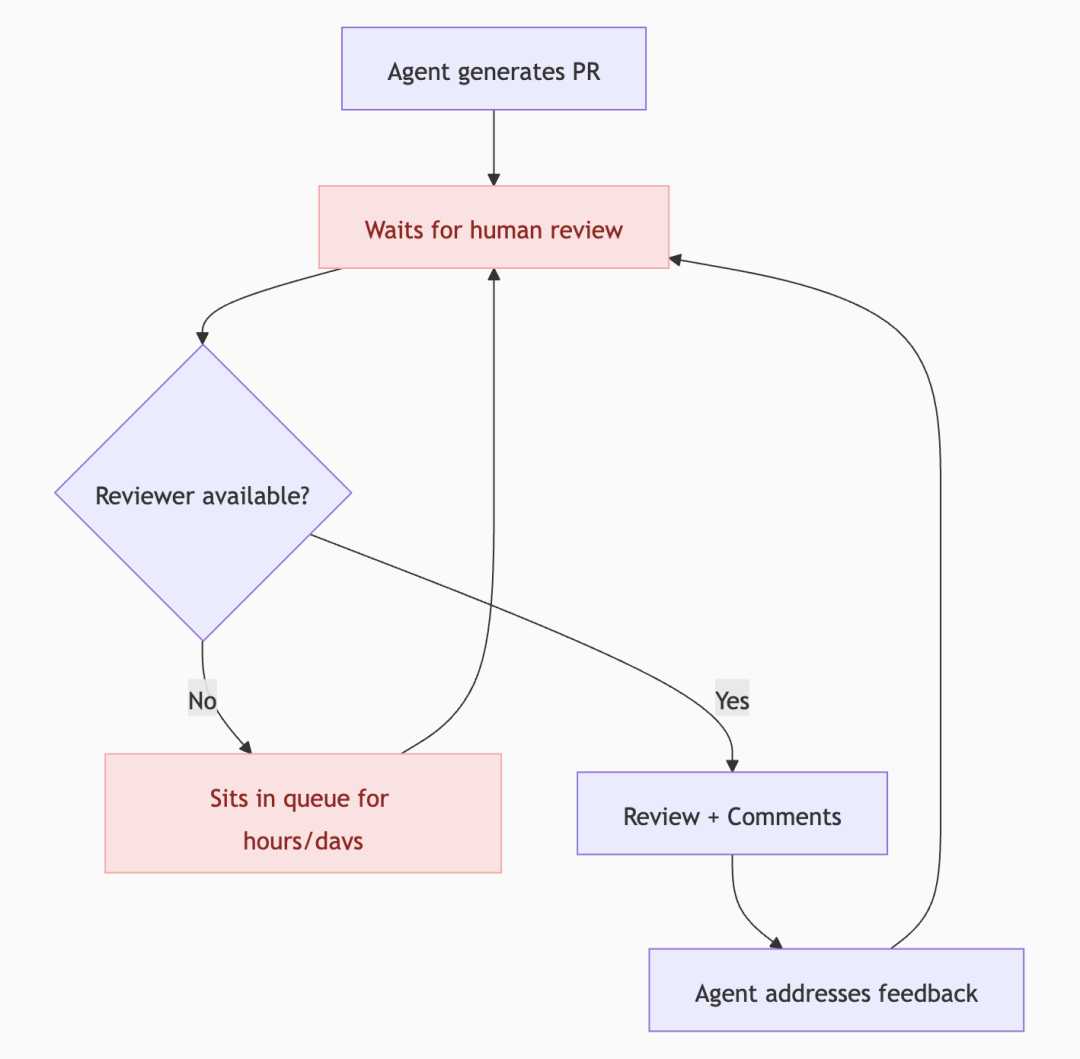

🎬 Pragmatic Engineer sat down with Boris Cherny, the creator of Claude Code, tracing its journey from an Anthropic side project to one of the fastest-growing developer tools. Boris ships 20–30 PRs a day, all of them AI-generated, without editing a single line by hand. The conversation also covers the internal debate at Anthropic over whether to release it publicly, how code review is evolving in the AI era, and the layered security architecture behind Claude Code.

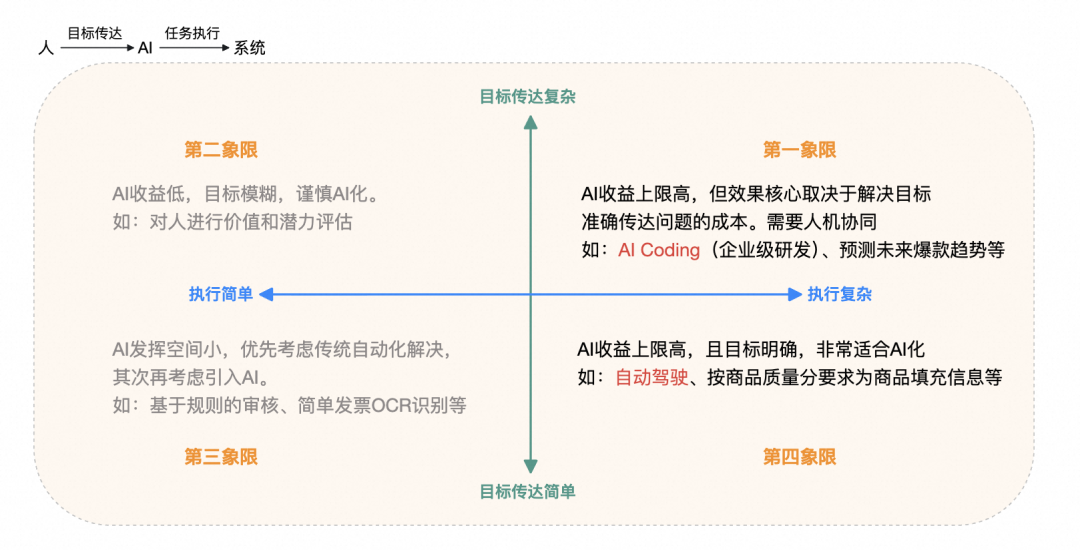

🔧 Alibaba's Tmall engineering team identifies the real bottleneck in enterprise AI coding: not agent execution capability, but accurately conveying complex task goals to the AI. Their answer is a layered, unified expert knowledge base that systematically reduces entropy (ambiguity in what the agent is asked to do), shifting the focus from tool-level efficiency to knowledge-driven development. OpenAI's Codex experience echoes the same insight: 1,500 PRs over five months with zero hand-written code, scaled through structured knowledge management, rigid architectural constraints, and periodic code entropy cleanup.

📁 Tencent Cloud published what may be the most thorough Chinese-language teardown of OpenClaw's context management. It covers a three-tier defense system (preemptive pruning, LLM-based summarization, and post-overflow recovery) and analyzes how each operation affects the provider's KV cache, and with it, cost. Essential reading for anyone building long-session agents.
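
To make the three tiers concrete, here is a minimal Python sketch of how such a layered policy might be wired together. It is not OpenClaw's code: `Message`, the soft and hard limits, the 4-characters-per-token estimate, the "drop old tool outputs first" pruning rule, and the `summarizer` callable (a stand-in for an LLM call) are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class Message:
    role: str             # "system", "user", "assistant", or "tool"
    text: str
    pinned: bool = False  # pinned messages (e.g. the system prompt) are never dropped

class ContextOverflow(Exception):
    """Raised by the stand-in provider when a request exceeds its hard limit."""

def estimate_tokens(text: str) -> int:
    # Crude assumption: roughly 4 characters per token.
    return max(1, len(text) // 4)

def context_tokens(msgs: List[Message]) -> int:
    return sum(estimate_tokens(m.text) for m in msgs)

def prune_tool_outputs(msgs: List[Message], keep_recent: int) -> List[Message]:
    """Tier 1 (preemptive pruning): drop bulky tool outputs older than the recent window."""
    cutoff = max(0, len(msgs) - keep_recent)
    return [m for i, m in enumerate(msgs)
            if i >= cutoff or m.pinned or m.role != "tool"]

def summarize_older(msgs: List[Message], keep_recent: int,
                    summarizer: Callable[[List[Message]], str]) -> List[Message]:
    """Tier 2 (summarization): collapse older unpinned messages into one summary."""
    cutoff = max(0, len(msgs) - keep_recent)
    pinned = [m for m in msgs[:cutoff] if m.pinned]
    older = [m for m in msgs[:cutoff] if not m.pinned]
    if not older:
        return msgs
    summary = Message("assistant", "Summary: " + summarizer(older))
    # Pinned messages are placed first and left unchanged; if they already formed the
    # prompt prefix, a provider's prefix (KV) cache can still reuse them, while
    # everything from the summary onward must be re-processed.
    return pinned + [summary] + msgs[cutoff:]

def recover_from_overflow(msgs: List[Message], hard_limit: int) -> List[Message]:
    """Tier 3 (post-overflow recovery): drop the oldest unpinned messages until it fits."""
    result = list(msgs)
    while context_tokens(result) > hard_limit:
        idx = next((i for i, m in enumerate(result) if not m.pinned), None)
        if idx is None:
            break  # only pinned messages remain; nothing else can be dropped
        del result[idx]
    return result

def send(msgs: List[Message], hard_limit: int) -> List[Message]:
    # Hypothetical stand-in for a provider call that rejects oversized requests.
    if context_tokens(msgs) > hard_limit:
        raise ContextOverflow(f"{context_tokens(msgs)} > {hard_limit} tokens")
    return msgs

def manage_context(msgs: List[Message], soft_limit: int, hard_limit: int,
                   keep_recent: int, summarizer: Callable[[List[Message]], str]) -> List[Message]:
    if context_tokens(msgs) > soft_limit:
        msgs = prune_tool_outputs(msgs, keep_recent)           # tier 1
    if context_tokens(msgs) > soft_limit:
        msgs = summarize_older(msgs, keep_recent, summarizer)  # tier 2
    try:
        return send(msgs, hard_limit)
    except ContextOverflow:
        return send(recover_from_overflow(msgs, hard_limit), hard_limit)  # tier 3

history = [
    Message("system", "s" * 40, pinned=True),
    Message("user", "u" * 40),
    Message("tool", "t" * 400),
    Message("assistant", "a" * 40),
    Message("user", "v" * 40),
]
count = lambda ms: f"{len(ms)} messages"
# Tier 1 suffices here: the old tool output is pruned (140 -> 40 tokens).
print(context_tokens(manage_context(history, 50, 100, 2, count)))  # → 40
# A recent window covering everything skips tiers 1-2, so the request overflows and tier 3 trims it.
print(context_tokens(manage_context(history, 50, 60, 5, count)))   # → 30
```

The ordering reflects a cost gradient: cheap deterministic pruning first, a paid summarization call second, and lossy recovery only after the provider rejects a request.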

⚡ Small models are rewriting performance expectations. Qwen3.5 ships four small models from 0.8B to 9B parameters, all under Apache 2.0 and fine-tunable on consumer GPUs. The 4B stands out for multimodal and agent capabilities, while the 9B performs close to much larger models. Xiaohongshu's open-source FireRed-OCR takes a different angle, turning Qwen3-VL-2B into a dedicated document-parsing model through three-stage progressive training. It scores 92.94% on OmniDocBench v1.5, ranks first among end-to-end solutions, and supports formulas, tables, and handwriting. Both projects make the same point: targeted training strategies can beat brute-force parameter scaling.

🎨 Anthropic's head of design Jenny Wen shares a striking observation: the traditional design process is dead, not because designers chose to change, but because engineers shipping at AI speed forced the shift. The share of her time spent on polished mockups dropped from 60–70% to 30–40%, replaced by pairing directly with engineers and even editing code herself. Design work is splitting into two tracks: real-time collaboration that supports engineering execution, and vision design that sets direction 3 to 6 months out.

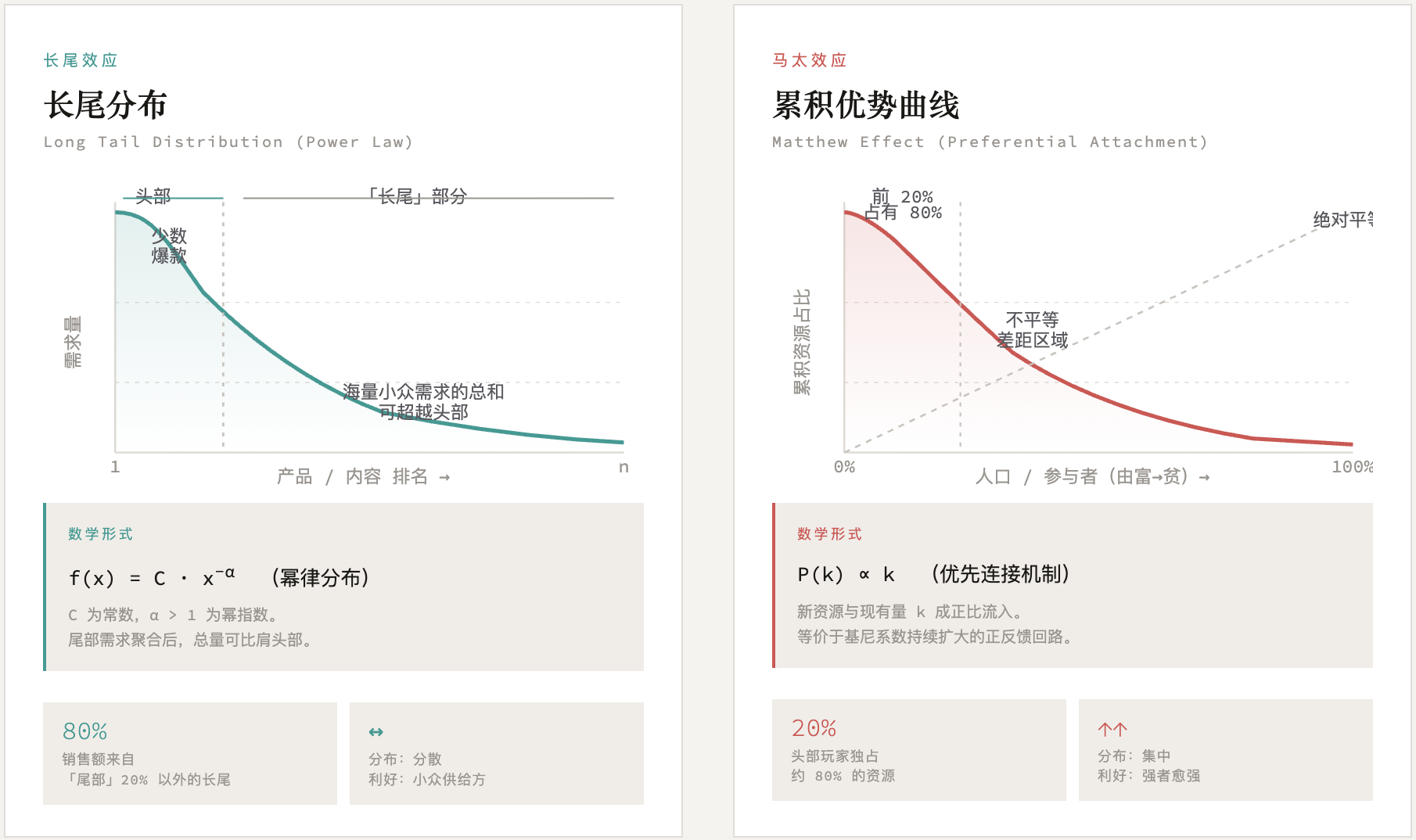

💡 A three-hour conversation between Meng Yan and Li Jigang starts from one powerful premise: the industrial revolution took away physical labor, AI is taking away mental labor, and what remains for humans is "heart force." The dialogue extends into the nature of vector spaces, business models shifting from weaving nets to digging wells, and education shifting from pouring water to lighting a fire. Two insights are worth unpacking on their own: "Your feed is your fate" and "prompts have shapes."

📈 Zapier's VP of Product shares first-hand lessons from running 800 AI agents internally: technology adoption and business transformation must be treated as separate efforts, and transformation only sticks when leadership personally uses AI tools. Insight Partners' co-founder goes further: autonomous agents are the real core of this wave, SaaS per-seat pricing will give way to consumption-based models, and white-collar job displacement will become an election issue within two years.

🌐 A thought experiment written from a 2028 vantage point deserves attention: white-collar job losses trigger consumer spending contraction, which triggers private credit defaults, which pressures mortgage markets, forming a negative feedback loop with no natural brake. Not a prediction, but a systematic framework for reasoning about left-tail risks. Worth a careful read for anyone thinking about AI's economic impact.

Hope this issue sparks some new ideas. Stay curious, and see you next week!