Hey there! Welcome to BestBlogs.dev Issue #86.

One word kept surfacing across every layer this week: infrastructure. On the tenth anniversary of AlphaGo, Demis Hassabis wrote a personal retrospective tracing the arc from Go to protein folding and mathematical discovery, then laid out a clear AGI roadmap: Gemini's multimodal perception is merging with AlphaGo's logical planning, evolving AI from a tool into an "AI co-scientist." On the application side, OpenClaw has officially surpassed React as the most-starred project in GitHub history—no longer just an open-source tool, but an agent operating system sinking into foundational infrastructure. From a solo developer's six-layer governance model, to three generations of enterprise code review, to Jensen Huang's AI "five-layer cake," this week's content collectively answers one question: when AI coding becomes table stakes, your real competitive edge comes from the infrastructure you build.

This week I focused on assembling a personal content workflow using Skills, connecting the full pipeline from content ingestion through curation, deep reading, persona-based content creation, and multi-platform publishing to analytics. The goal is to upgrade fragmented information consumption into a content operating system with a feedback loop. I'm still iterating, but I can already feel the qualitative shift that comes from wiring tools into a system, which resonates deeply with the core insight running through this week's articles.

Here are 10 highlights worth your attention this week:

🏆 On the tenth anniversary of AlphaGo, Google DeepMind co-founder Demis Hassabis wrote a personal reflection on the Move 37 moment and its decade-long impact. The real legacy isn't beating a human champion; it's validating a general search-and-reasoning methodology that was then transplanted into AlphaFold, FunSearch, and chip design. His AGI roadmap is clear: Gemini's multimodal perception combined with AlphaGo's logical planning, pushing AI from tool to autonomous "co-scientist."

🔮 Two major foundation models debuted this week. Gemini Embedding 2 is Google's first native multimodal embedding model, unifying text, image, audio, and video into a single vector space, with support for 100+ languages and flexible dimension compression via MRL (Matryoshka Representation Learning), a critical upgrade for multimodal RAG architectures. NVIDIA Nemotron 3 Super fills the open-source gap for agentic reasoning: 120B parameters, 1M context length, and a Mamba-Transformer hybrid architecture delivering 5x throughput gains, making it the best open-source choice for complex, long-horizon multi-agent tasks.
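
If MRL is new to you, here's a minimal sketch of what "flexible dimension compression" usually means in practice: you keep only the leading components of an embedding and re-normalize, trading a little accuracy for smaller vectors. The vectors below are made-up toy values, not real model output, and `truncate_embedding` and `cosine` are hypothetical helper names for illustration. Nothing here calls the Gemini API.

```python
import math


def truncate_embedding(vec, dim):
    """Keep the first `dim` components and L2-normalize the result."""
    if not 0 < dim <= len(vec):
        raise ValueError("dim must be between 1 and len(vec)")
    head = vec[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        # Nothing to normalize; return the zero prefix unchanged.
        return list(head)
    return [x / norm for x in head]


def cosine(a, b):
    """Cosine similarity of two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy 8-dimensional vectors standing in for real embeddings (assumption).
query = [0.9, 0.1, 0.3, 0.0, 0.05, 0.02, 0.01, 0.03]
doc = [0.8, 0.2, 0.4, 0.1, 0.01, 0.04, 0.02, 0.00]

full_score = cosine(query, doc)
short_score = cosine(truncate_embedding(query, 4), truncate_embedding(doc, 4))
print(f"full: {full_score:.3f}  truncated to 4 dims: {short_score:.3f}")
```

The sketch assumes the model was trained so that the leading dimensions carry most of the signal, which is the premise of Matryoshka-style training. With an ordinary embedding model, truncating this way can hurt retrieval quality noticeably.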

🤖 Two foundational agent component studies are worth bookmarking. Tongyi Lab's open-source Mobile-Agent-v3.5 achieves unified GUI automation across desktop, mobile, and browser through a hybrid data flywheel and reinforcement learning, hitting open-source SOTA on 20+ benchmarks. Microsoft Research's PlugMem distills agent interaction history into structured facts and reusable skills, delivering higher-quality decision context with fewer tokens, outperforming traditional retrieval methods in dialogue and web browsing scenarios.

🦞 Professor Hung-Yi Lee's video "Dissecting the Lobster" deconstructs AI Agent architecture with textbook clarity: system prompts for identity, RAG and compression for breaking context limits, heartbeat mechanisms for 24/7 autonomous operation, and Sub-Agent orchestration for complex task decomposition. Tencent Engineering's hands-on guide complements this with a complete deployment path from hardware selection to multi-agent coordination, along with a key safety warning: any system pursuing high autonomy must plan for the worst-case scenario of full data exposure. Theory meets practice—together they form the best entry point for understanding the OpenClaw ecosystem.

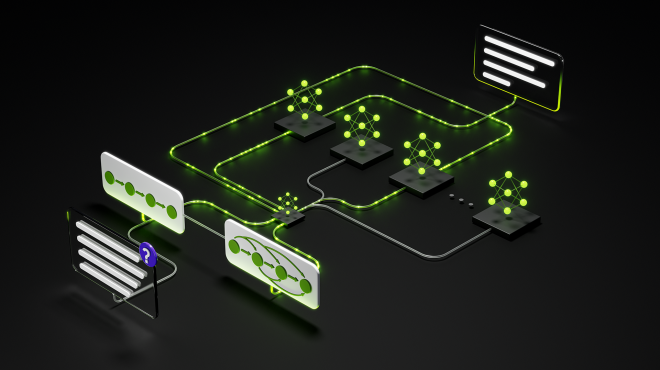

🏗️ After six months of intensive Claude Code usage, Tw93 distilled a six-layer governance model: CLAUDE.md, Tools/MCP, Skills, Hooks, Subagents, and Verifiers. The core insight: agent failures rarely stem from insufficient model capability—they come from context pollution, tool redundancy, and lack of deterministic constraints. HackerNoon's "Scalability Triangle" offers a complementary decision framework—MCP handles dynamic data interaction, Subagents handle task isolation and model routing, Skills handle static knowledge injection—with clear boundaries to prevent over-engineering. Read together, these two pieces are the most systematic treatment of Claude Code engineering practices available.

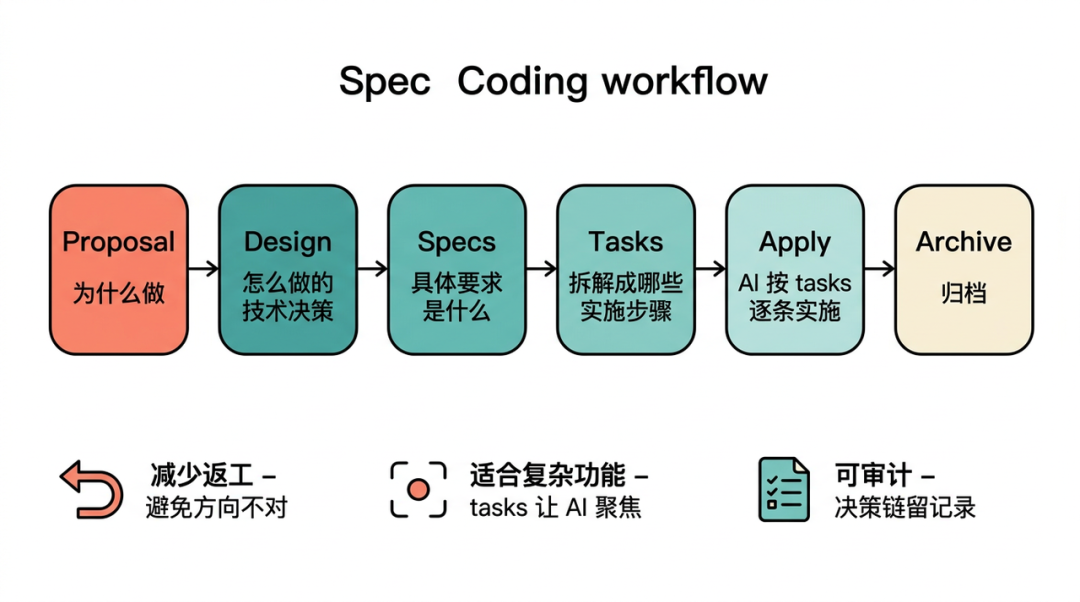

⚡ OpenAI's Build Hour and Dewu's Spec Coding case study showcase production-grade agent engineering from two perspectives. OpenAI proposes Harness Engineering with seven readability metrics, arguing that embedding agent.md rules in the codebase lets AI independently ship PRs. Dewu's team validated their three-layer specification system (Rules/Code/UI) with 2,754 real tool calls over 10 days: the 36% efficiency gain required systematic upfront investment in specs. The article also candidly documents where AI breaks down in complex CI environments—that honesty makes this field report even more valuable.

🔍 Kuaishou's intelligent Code Review is this week's most instructive enterprise case study. Three generations of architecture evolution—from LLM heuristics to knowledge engine plus deterministic rules, then to agentic autonomous decision-making—pushed code review adoption from 7.9% to 54%, cutting MR turnaround time by nearly 10%. The breakthrough: building 1,100+ hard rules to eliminate AI hallucinations, achieving a paradigm shift from personal assistant to organization-level collaborator. This evolution path offers direct lessons for any team pushing AI engineering into production.

🌐 Founder Park surveyed 500+ OpenClaw-related products on Product Hunt from February, spanning cloud hosting, Skill development, Agent social networks, and competitors. An entire ecosystem has emerged bottom-up, without top-down planning. OpenClaw isn't just a tool anymore; it's an operating-system-level platform. Meanwhile, LangChain argues that as implementation costs plummet, the software development bottleneck is shifting from building to reviewing. The talent landscape will split into full-stack "builders" and architecture-focused "reviewers," with product sense becoming the core competency across all roles.

🎨 Three articles interrogate the human position in the AI era from different angles. A YC design expert's retrospective on Vibe Coded websites reveals the homogeneity trap: over-reliance on LLMs produces cookie-cutter fade-in animations—AI is an execution lever, not a substitute for thinking. Elys founder Tristan offers another dimension: a person's soul is the sum of all their context, and AI social products must anchor one end to real humans—memory slots and entropy reduction are the true technical moats. Read together, they point to the same conclusion: the more powerful the tools, the more precious human judgment becomes.

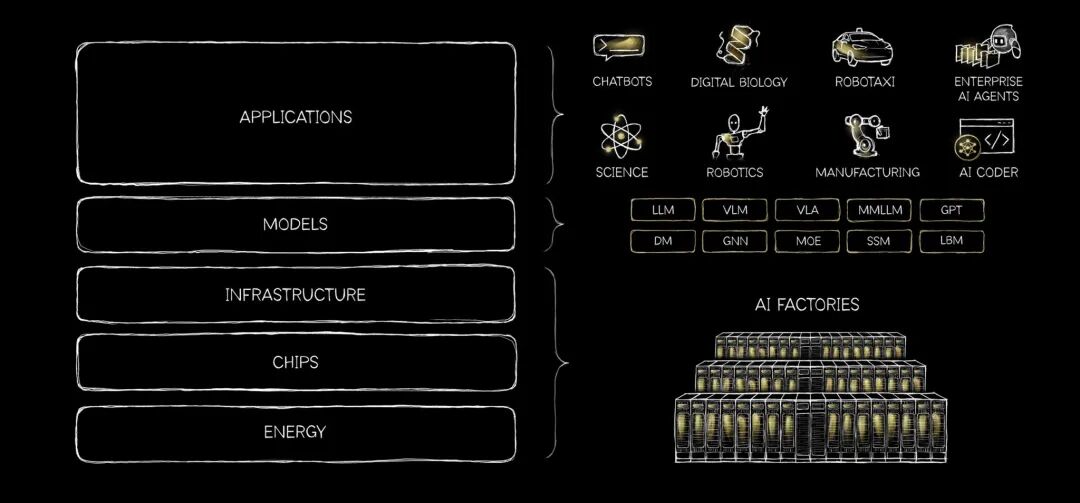

📈 Four macro pieces paint a panoramic view of AI. Jensen Huang's bylined essay deconstructs AI into a five-layer cake from energy to applications, arguing that open-source models are the catalyst activating full-stack demand. a16z's top-100 consumer AI report identifies personal memory as the next core moat. "2026 Letter to AI Founders" draws on the printing press, electric motor, and cloud computing to derive a law of profit conservation—when implementation stops being the bottleneck, value migrates to architectural judgment and product intuition. And a 70-page solo PPT deck delivers the most comprehensive data review of the Q1 2026 US-China AI landscape.

Hope this issue sparks some new ideas. Stay curious, and see you next week!